Why you should write your own LLM benchmarks — with Nicholas Carlini, Google DeepMind

Manage episode 436875880 series 3451473

AI Engineering is expanding! Join the first 🇬🇧 AI Engineer London meetup in Sept and get in touch for sponsoring the second 🗽 AI Engineer Summit in NYC this Dec!

The current online AI discourse only has two camps: those who think AI is going to revolutionize everything, and those who think it's all hype. Today's guest, Nicholas Carlini, a research scientist at DeepMind, argues that we should be focusing more on what AI can do for us individually, rather than trying to have an answer for everyone.

"How I Use AI" - A Pragmatic Approach

Carlini's blog post "How I Use AI" went viral (eg on HN) for good reason. Instead of giving a personal opinion about AI's potential, he simply laid out how he, as a deeply technical researcher, uses AI tools in his daily work. He divided it in 12 sections:

To make applications

As a tutor

To get started

To simplify code

For boring tasks

To automate tasks

As an API reference

As a search engine

To solve one-offs

To teach me

Solving solved problems

To fix errors

Each of the sections has specific examples, so we recommend going through it. It also includes all prompts used for it; in the "make applications" case, it's 30,000 words total!

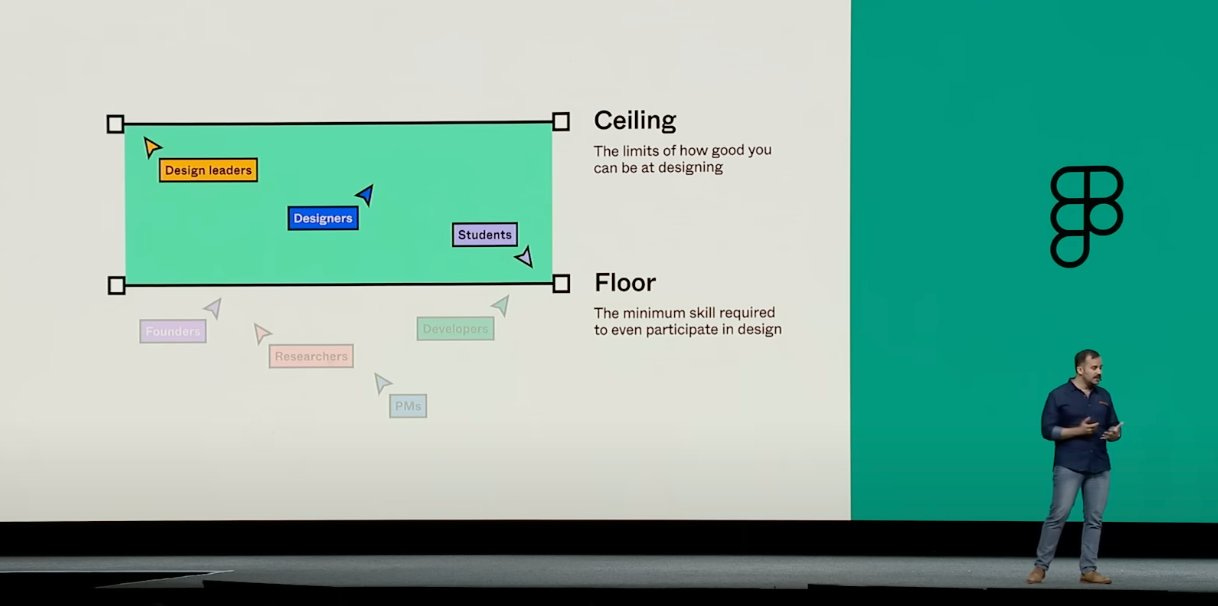

My personal takeaway is that the majority of the work AI can do successfully is what humans dislike doing anyway. Writing boilerplate code, looking up docs, taking repetitive actions, etc. These are usually boring tasks with little creativity, but with a lot of structure. This is the strongest arguments as to why LLMs, especially for code, are more beneficial to senior employees: if you can get the boring stuff out of the way, there's a lot more value you can generate. This is less and less true as you go entry level jobs which are mostly boring and repetitive tasks. The best language for this comes from Noah Levin, VP of Design at Figma:

Nicholas argues both sides at ~21:34 in the pod.

A New Approach to LLM Benchmarks

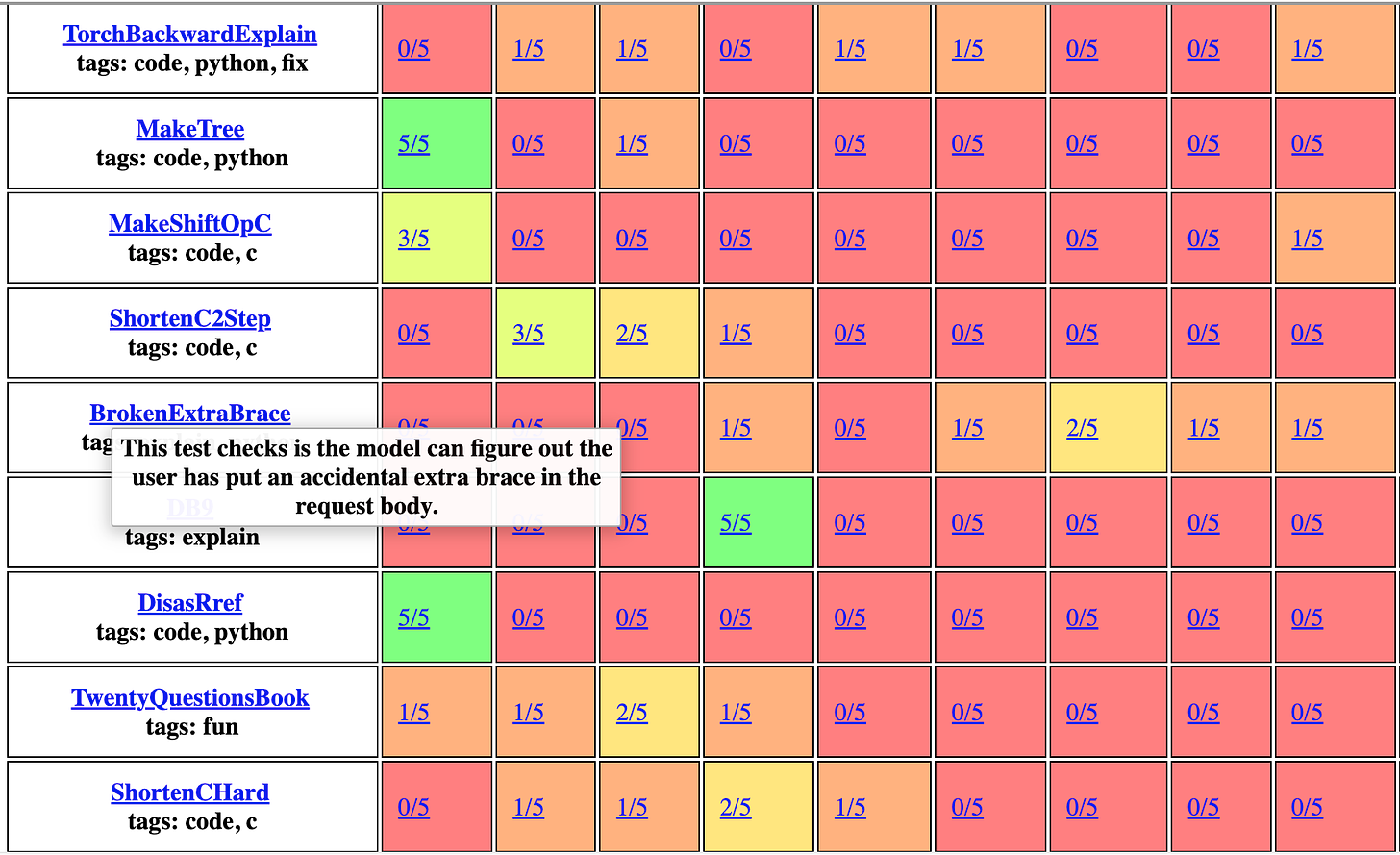

We recently did a Benchmarks 201 episode, a follow up to our original Benchmarks 101, and some of the issues have stayed the same. Notably, there's a big discrepancy between what benchmarks like MMLU test, and what the models are used for. Carlini created his own domain-specific language for writing personalized LLM benchmarks. The idea is simple but powerful:

Take tasks you've actually needed AI for in the past.

Turn them into benchmark tests.

Use these to evaluate new models based on your specific needs.

It can represent very complex tasks, from a single code generation to drawing a US flag using C:

"Write hello world in python" >> LLMRun() >> PythonRun() >> SubstringEvaluator("hello world")"Write a C program that draws an american flag to stdout." >> LLMRun() >> CRun() >> \ VisionLLMRun("What flag is shown in this image?") >> \ (SubstringEvaluator("United States") | SubstringEvaluator("USA")))He published a full benchmark in his blog post; some of the HumanEval-like tasks are pretty easy, but there are a ton of benchmarks that completely fail across all models.

This approach solves a few problems:

It measures what's actually useful to you, not abstract capabilities.

It's harder for model creators to "game" your specific benchmark, a problem that has plagued standardized tests.

It gives you a concrete way to decide if a new model is worth switching to, similar to how developers might run benchmarks before adopting a new library or framework.

Carlini argues that if even a small percentage of AI users created personal benchmarks, we'd have a much better picture of model capabilities in practice. So get to forking the repo!

AI Security

While much of the AI security discussion focuses on either prompt jailbreaks (episode coming soon!) or existential risks, Carlini's research targets the space in between. Some highlights from his recent work:

LAION 400M data poisoning: By buying expired domains referenced in the dataset, Carlini's team could inject arbitrary images into models trained on LAION 400M. You can read the paper "Poisoning Web-Scale Training Datasets is Practical", for all the details. This is a great example of expanding the scope beyond the model itself, and looking at the whole system and how ti can become vulnerable.

Stealing model weights: They demonstrated how to extract parts of production language models (like OpenAI's) through careful API queries. This research, "Extracting Training Data from Large Language Models", shows that even black-box access can leak sensitive information.

Extracting training data: In some cases, they found ways to make models regurgitate verbatim snippets from their training data. Carlini and Milad Nasr wrote a paper on this as well: Scalable Extraction of Training Data from (Production) Language Models. They also think this might be applicable to extracting RAG results from a generation.

These aren't just theoretical attacks. They've led to real changes in how companies like OpenAI design their APIs and handle data. If you really miss logit_bias and logit results by token, you can blame Nicholas :)

We had a ton of fun also chatting about things like Conway's Game of Life, how much data can fit in a piece of paper, and porting Doom to Javascript. Enjoy!

Full Video Podcast

Show Notes

Timestamps

[00:00:00] Intro song from Suno

[00:03:44] Why Nicholas writes

[00:04:39] The Game of Life

[00:07:37] "How I Use AI" blog post origin story

[00:10:44] Do we need software engineering agents?

[00:13:33] Using AI to kickstart a project

[00:16:38] Ephemeral software

[00:19:57] Using AI to accelerate research

[00:23:54] Experts vs non-expert users as beneficiaries of AI

[00:26:02] Research on generating less secure code with LLMs.

[00:29:22] Learning and explaining code with AI

[00:32:12] AGI speculations?

[00:34:50] Distributing content without social media

[00:37:39] How much data do you think you can put on a single piece of paper?

[00:39:37] Building personal AI benchmarks

[00:45:04] Evolution of prompt engineering and its relevance

[00:48:06] Model vs task benchmarking

[00:54:14] Poisoning LAION 400M through expired domains

[00:57:38] Stealing OpenAI models from their API

[01:03:29] Data stealing and recovering training data from models

[01:05:30] Finding motivation in your work

Transcript

Alessio [00:00:00]: Hey everyone, welcome to the Latent Space podcast. This is Alessio, partner and CTO-in-Residence at Decibel Partners, and I'm joined by my co-host Swyx, founder of Smol AI.

Swyx [00:00:12]: Hey, and today we're in the in-person studio, which Alessio has gorgeously set up for us, with Nicholas Carlini. Welcome. Thank you. You're a research scientist at DeepMind. You work at the intersection of machine learning and computer security. You got your PhD from Berkeley in 2018, and also your BA from Berkeley as well. And mostly we're here to talk about your blogs, because you are so generous in just writing up what you know. Well, actually, why do you write?

Nicholas [00:00:41]: Because I like, I feel like it's fun to share what you've done. I don't like writing, sufficiently didn't like writing, I almost didn't do a PhD, because I knew how much writing was involved in writing papers. I was terrible at writing when I was younger. I do like the remedial writing classes when I was in university, because I was really bad at it. So I don't actually enjoy, I still don't enjoy the act of writing. But I feel like it is useful to share what you're doing, and I like being able to talk about the things that I'm doing that I think are fun. And so I write because I think I want to have something to say, not because I enjoy the act of writing.

Swyx [00:01:14]: But yeah. It's a tool for thought, as they often say. Is there any sort of backgrounds or thing that people should know about you as a person? Yeah.

Nicholas [00:01:23]: So I tend to focus on, like you said, I do security work, I try to like attacking things and I want to do like high quality security research. And that's mostly what I spend my actual time trying to be productive members of society doing that. But then I get distracted by things, and I just like, you know, working on random fun projects. Like a Doom clone in JavaScript.

Swyx [00:01:44]: Yes.

Nicholas [00:01:45]: Like that. Or, you know, I've done a number of things that have absolutely no utility. But are fun things to have done. And so it's interesting to say, like, you should work on fun things that just are interesting, even if they're not useful in any real way. And so that's what I tend to put up there is after I have completed something I think is fun, or if I think it's sufficiently interesting, write something down there.

Alessio [00:02:09]: Before we go into like AI, LLMs and whatnot, why are you obsessed with the game of life? So you built multiplexing circuits in the game of life, which is mind boggling. So where did that come from? And then how do you go from just clicking boxes on the UI web version to like building multiplexing circuits?

Nicholas [00:02:29]: I like Turing completeness. The definition of Turing completeness is a computer that can run anything, essentially. And the game of life, Conway's game of life is a very simple cellular 2D automata where you have cells that are either on or off. And a cell becomes on if in the previous generation some configuration holds true and off otherwise. It turns out there's a proof that the game of life is Turing complete, that you can run any program in principle using Conway's game of life. I don't know. And so you can, therefore someone should. And so I wanted to do it. Some other people have done some similar things, but I got obsessed into like, if you're going to try and make it work, like we already know it's possible in theory. I want to try and like actually make something I can run on my computer, like a real computer I can run. And so yeah, I've been going on this rabbit hole of trying to make a CPU that I can run semi real time on the game of life. And I have been making some reasonable progress there. And yeah, but you know, Turing completeness is just like a very fun trap you can go down. A while ago, as part of a research paper, I was able to show that in C, if you call into printf, it's Turing complete. Like printf, you know, like, which like, you know, you can print numbers or whatever, right?

Swyx [00:03:39]: Yeah, but there should be no like control flow stuff.

Nicholas [00:03:42]: Because printf has a percent n specifier that lets you write an arbitrary amount of data to an arbitrary location. And the printf format specifier has an index into where it is in the loop that is in memory. So you can overwrite the location of where printf is currently indexing using percent n. So you can get loops, you can get conditionals, and you can get arbitrary data rates again. So we sort of have another Turing complete language using printf, which again, like this has essentially zero practical utility, but like, it's just, I feel like a lot of people get into programming because they enjoy the art of doing these things. And then they go work on developing some software application and lose all joy with the boys. And I want to still have joy in doing these things. And so on occasion, I try to stop doing productive, meaningful things and just like, what's a fun thing that we can do and try and make that happen.

Alessio [00:04:39]: Awesome. So you've been kind of like a pioneer in the AI security space. You've done a lot of talks starting back in 2018. We'll kind of leave that to the end because I know the security part is, there's maybe a smaller audience, but it's a very intense audience. So I think that'll be fun. But everybody in our Discord started posting your how I use AI blog post and we were like, we should get Carlini on the podcast. And then you were so nice to just, yeah, and then I sent you an email and you're like, okay, I'll come.

Swyx [00:05:07]: And I was like, oh, I thought that would be harder.

Alessio [00:05:10]: I think there's, as you said in the blog posts, a lot of misunderstanding about what LLMs can actually be used for. What are they useful at? What are they not good at? And whether or not it's even worth arguing what they're not good at, because they're obviously not. So if you cannot count the R's in a word, they're like, it's just not what it does. So how painful was it to write such a long post, given that you just said that you don't like to write? Yeah. And then we can kind of run through the things, but maybe just talk about the motivation, why you thought it was important to do it.

Nicholas [00:05:39]: Yeah. So I wanted to do this because I feel like most people who write about language models being good or bad, some underlying message of like, you know, they have their camp and their camp is like, AI is bad or AI is good or whatever. And they like, they spin whatever they're going to say according to their ideology. And they don't actually just look at what is true in the world. So I've read a lot of things where people say how amazing they are and how all programmers are going to be obsolete by 2024. And I've read a lot of things where people who say like, they can't do anything useful at all. And, you know, like, they're just like, it's only the people who've come off of, you know, blockchain crypto stuff and are here to like make another quick buck and move on. And I don't really agree with either of these. And I'm not someone who cares really one way or the other how these things go. And so I wanted to write something that just says like, look, like, let's sort of ground reality and what we can actually do with these things. Because my actual research is in like security and showing that these models have lots of problems. Like this is like my day to day job is saying like, we probably shouldn't be using these in lots of cases. I thought I could have a little bit of credibility of in saying, it is true. They have lots of problems. We maybe shouldn't be deploying them lots of situations. And still, they are also useful. And that is the like, the bit that I wanted to get across is to say, I'm not here to try and sell you on anything. I just think that they're useful for the kinds of work that I do. And hopefully, some people would listen. And it turned out that a lot more people liked it than I thought. But yeah, that was the motivation behind why I wanted to write this.

Alessio [00:07:15]: So you had about a dozen sections of like how you actually use AI. Maybe we can just kind of run through them all. And then maybe the ones where you have extra commentary to add, we can... Sure.

Nicholas [00:07:27]: Yeah, yeah. I didn't put as much thought into this as maybe was deserved. I probably spent, I don't know, definitely less than 10 hours putting this together.

Swyx [00:07:38]: Wow.

Alessio [00:07:39]: It took me close to that to do a podcast episode. So that's pretty impressive.

Nicholas [00:07:43]: Yeah. I wrote it in one pass. I've gotten a number of emails of like, you got this editing thing wrong, you got this sort of other thing wrong. It's like, I haven't just haven't looked at it. I tend to try it. I feel like I still don't like writing. And so because of this, the way I tend to treat this is like, I will put it together into the best format that I can at a time, and then put it on the internet, and then never change it. And this is an aspect of like the research side of me is like, once a paper is published, like it is done as an artifact that exists in the world. I could forever edit the very first thing I ever put to make it the most perfect version of what it is, and I would do nothing else. And so I feel like I find it useful to be like, this is the artifact, I will spend some certain amount of hours on it, which is what I think it is worth. And then I will just...

Swyx [00:08:22]: Yeah.

Nicholas [00:08:23]: Timeboxing.

Alessio [00:08:24]: Yeah. Stop. Yeah. Okay. We just recorded an episode with the founder of Cosine, which is like an AI software engineer colleague. You said it took you 30,000 words to get GPT-4 to build you the, can GPT-4 solve this kind of like app. Where are we in the spectrum where chat GPT is all you need to actually build something versus I need a full on agent that does everything for me?

Nicholas [00:08:46]: Yeah. Okay. So this was an... So I built a web app last year sometime that was just like a fun demo where you can guess if you can predict whether or not GPT-4 at the time could solve a given task. This is, as far as web apps go, very straightforward. You need basic HTML, CSS, you have a little slider that moves, you have a button, sort of animate the text coming to the screen. The reason people are going here is not because they want to see my wonderful HTML, right? I used to know how to do modern HTML in 2007, 2008. I was very good at fighting with IE6 and these kinds of things. I knew how to do that. I have no longer had to build any web app stuff in the meantime, which means that I know how everything works, but I don't know any of the new... Flexbox is new to me. Flexbox is like 10 years old at this point, but it's just amazing being able to go to the model and just say, write me this thing and it will give me all of the boilerplate that I need to get going. Of course it's imperfect. It's not going to get you the right answer, and it doesn't do anything that's complicated right now, but it gets you to the point where the only remaining work that needs to be done is the interesting hard part for me, the actual novel part. Even the current models, I think, are entirely good enough at doing this kind of thing, that they're very useful. It may be the case that if you had something, like you were saying, a smarter agent that could debug problems by itself, that might be even more useful. Currently though, make a model into an agent by just copying and pasting error messages for the most part. That's what I do, is you run it and it gives you some code that doesn't work, and either I'll fix the code, or it will give me buggy code and I won't know how to fix it, and I'll just copy and paste the error message and say, it tells me this. What do I do? And it will just tell me how to fix it. You can't trust these things blindly, but I feel like most people on the internet already understand that things on the internet, you can't trust blindly. And so this is not like a big mental shift you have to go through to understand that it is possible to read something and find it useful, even if it is not completely perfect in its output.

Swyx [00:10:54]: It's very human-like in that sense. It's the same ring of trust, I kind of think about it that way, if you had trust levels.

Alessio [00:11:03]: And there's maybe a couple that tie together. So there was like, to make applications, and then there's to get started, which is a similar you know, kickstart, maybe like a project that you know the LLM cannot solve. It's kind of how you think about it.

Nicholas [00:11:15]: Yeah. So for getting started on things is one of the cases where I think it's really great for some of these things, where I sort of use it as a personalized, help me use this technology I've never used before. So for example, I had never used Docker before January. I know what Docker is. Lucky you. Yeah, like I'm a computer security person, like I sort of, I have read lots of papers on, you know, all the technology behind how these things work. You know, I know all the exploits on them, I've done some of these things, but I had never actually used Docker. But I wanted it to be able to, I could run the outputs of language model stuff in some controlled contained environment, which I know is the right application. So I just ask it like, I want to use Docker to do this thing, like, tell me how to run a Python program in a Docker container. And it like gives me a thing. I'm like, step back. You said Docker compose, I do not know what this word Docker compose is. Is this Docker? Help me. And like, you'll sort of tell me all of these things. And I'm sure there's this knowledge that's out there on the internet, like this is not some groundbreaking thing that I'm doing, but I just wanted it as a small piece of one thing I was working on. And I didn't want to learn Docker from first principles. Like I, at some point, if I need it, I can do that. Like I have the background that I can make that happen. But what I wanted to do was, was thing one. And it's very easy to get bogged down in the details of this other thing that helps you accomplish your end goal. And I just want to like, tell me enough about Docker so I can do this particular thing. And I can check that it's doing the safe thing. I sort of know enough about that from, you know, my other background. And so I can just have the model help teach me exactly the one thing I want to know and nothing more. I don't need to worry about other things that the writer of this thinks is important that actually isn't. Like I can just like stop the conversation and say, no, boring to me. Explain this detail. I don't understand. I think that's what that was very useful for me. It would have taken me, you know, several hours to figure out some things that take 10 minutes if you could just ask exactly the question you want the answer to.

Alessio [00:13:05]: Have you had any issues with like newer tools? Have you felt any meaningful kind of like a cutoff day where like there's not enough data on the internet or? I'm sure that the answer to this is yes.

Nicholas [00:13:16]: But I tend to just not use most of these things. Like I feel like this is like the significant way in which I use machine learning models is probably very different than most people is that I'm a researcher and I get to pick what tools that I use and most of the things that I work on are fairly small projects. And so I can, I can entirely see how someone who is in a big giant company where they have their own proprietary legacy code base of a hundred million lines of code or whatever and like you just might not be able to use things the same way that I do. I still think there are lots of use cases there that are entirely reasonable that are not the same ones that I've put down. But I wanted to talk about what I have personal experience in being able to say is useful. And I would like it very much if someone who is in one of these environments would be able to describe the ways in which they find current models useful to them. And not, you know, philosophize on what someone else might be able to find useful, but actually say like, here are real things that I have done that I found useful for me.

Swyx [00:14:08]: Yeah, this is what I often do to encourage people to write more, to share their experiences because they often fear being attacked on the internet. But you are the ultimate authority on how you use things and there's this objectively true. So they cannot be debated. One thing that people are very excited about is the concept of ephemeral software or like personal software. This use case in particular basically lowers the activation energy for creating software, which I like as a vision. I don't think I have taken as much advantage of it as I could. I feel guilty about that. But also, we're trending towards there.

Nicholas [00:14:47]: Yeah. No, I mean, I do think that this is a direction that is exciting to me. One of the things I wrote that was like, a lot of the ways that I use these models are for one-off things that I just need to happen that I'm going to throw away in five minutes. And you can.

Swyx [00:15:01]: Yeah, exactly.

Nicholas [00:15:02]: Right. It's like the kind of thing where it would not have been worth it for me to have spent 45 minutes writing this, because I don't need the answer that badly. But if it will only take me five minutes, then I'll just figure it out, run the program and then get it right. And if it turns out that you ask the thing, it doesn't give you the right answer. Well, I didn't actually need the answer that badly in the first place. Like either I can decide to dedicate the 45 minutes or I cannot, but like the cost of doing it is fairly low. You see what the model can do. And if it can't, then, okay, when you're using these models, if you're getting the answer you want always, it means you're not asking them hard enough questions.

Swyx [00:15:35]: Say more.

Nicholas [00:15:37]: Lots of people only use them for very small particular use cases and like it always does the thing that they want. Yeah.

Swyx [00:15:43]: Like they use it like a search engine.

Nicholas [00:15:44]: Yeah. Or like one particular case. And if you're finding that when you're using these, it's always giving you the answer that you want, then probably it has more capabilities than you're actually using. And so I oftentimes try when I have something that I'm curious about to just feed into the model and be like, well, maybe it's just solved my problem for me. You know, most of the time it doesn't, but like on occasion, it's like, it's done things that would have taken me, you know, a couple hours that it's been great and just like solved everything immediately. And if it doesn't, then it's usually easier to verify whether or not the answer is correct than to have written in the first place. And so you check, you're like, well, that's just, you're entirely misguided. Nothing here is right. It's just like, I'm not going to do this. I'm going to go write it myself or whatever.

Alessio [00:16:21]: Even for non-tech, I had to fix my irrigation system. I had an old irrigation system. I didn't know how I worked to program it. I took a photo, I sent it to Claude and it's like, oh yeah, that's like the RT 900. This is exactly, I was like, oh wow, you know, you know, a lot of stuff.

Swyx [00:16:34]: Was it right?

Alessio [00:16:35]: Yeah, it was right.

Swyx [00:16:36]: It worked. Did you compare with OpenAI?

Alessio [00:16:38]: No, I canceled my OpenAI subscription, so I'm a Claude boy. Do you have a way to think about this like one-offs software thing? One way I talk to people about it is like LLMs are kind of converging to like semantic serverless functions, you know, like you can say something and like it can run the function in a way and then that's it. It just kind of dies there. Do you have a mental model to just think about how long it should live for and like anything like that?

Nicholas [00:17:02]: I don't think I have anything interesting to say here, no. I will take whatever tools are available in front of me and try and see if I can use them in meaningful ways. And if they're helpful, then great. If they're not, then fine. And like, you know, there are lots of people that I'm very excited about seeing all these people who are trying to make better applications that use these or all these kinds of things. And I think that's amazing. I would like to see more of it, but I do not spend my time thinking about how to make this any better.

Alessio [00:17:27]: What's the most underrated thing in the list? I know there's like simplified code, solving boring tasks, or maybe is there something that you forgot to add that you want to throw in there?

Nicholas [00:17:37]: I mean, so in the list, I only put things that people could look at and go, I understand how this solved my problem. I didn't want to put things where the model was very useful to me, but it would not be clear to someone else that it was actually useful. So for example, one of the things that I use it a lot for is debugging errors. But the errors that I have are very much not the errors that anyone else in the world will have. And in order to understand whether or not the solution was right, you just have to trust me on it. Because, you know, like I got my machine in a state that like CUDA was not talking to whatever some other thing, the versions were mismatched, something, something, something, and everything was broken. And like, I could figure it out with interaction with the model, and it gave it like told me the steps I needed to take. But at the end of the day, when you look at the conversation, you just have to trust me that it worked. And I didn't want to write things online that were this, like, you have to trust me that what I'm saying. I want everything that I said to like have evidence that like, here's the conversation, you can go and check whether or not this actually solved the task as I said that the model does. Because a lot of people I feel like say, I used a model to solve this very complicated task. And what they mean is the model did 10%, and I did the other 90% or something, I wanted everything to be verifiable. And so one of the biggest use cases for me, I didn't describe even at all, because it's not the kind of thing that other people could have verified by themselves. So that maybe is like, one of the things that I wish I maybe had said a little bit more about, and just stated that the way that this is done, because I feel like that this didn't come across quite as well. But yeah, of the things that I talked about, the thing that I think is most underrated is the ability of it to solve the uninteresting parts of problems for me right now, where people always say, this is one of the biggest arguments that I don't understand why people say is, the model can only do things that people have done before. Therefore, the model is not going to be helpful in doing new research or like discovering new things. And as someone whose day job is to do new things, like what is research? Research is doing something literally no one else in the world has ever done before. So this is what I do every single day, 90% of this is not doing something new, 90% of this is doing things a million people have done before, and then a little bit of something that was new. There's a reason why we say we stand on the shoulders of giants. It's true. Almost everything that I do is something that's been done many, many times before. And that is the piece that can be automated. Even if the thing that I'm doing as a whole is new, it is almost certainly the case that the small pieces that build up to it are not. And a number of people who use these models, I feel like expect that they can either solve the entire task or none of the task. But now I find myself very often, even when doing something very new and very hard, having models write the easy parts for me. And the reason I think this is so valuable, everyone who programs understands this, like you're currently trying to solve some problem and then you get distracted. And whatever the case may be, someone comes and talks to you, you have to go look up something online, whatever it is. You lose a lot of time to that. And one of the ways we currently don't think about being distracted is you're solving some hard problem and you realize you need a helper function that does X, where X is like, it's a known algorithm. Any person in the world, you say like, give me the algorithm that, have a dense graph or a sparse graph, I need to make it dense. You can do this by doing some matrix multiplies. It's like, this is a solved problem. I knew how to do this 15 years ago, but it distracts me from the problem I'm thinking about in my mind. I needed this done. And so instead of using my mental capacity and solving that problem and then coming back to the problem I was originally trying to solve, you could just ask model, please solve this problem for me. It gives you the answer. You run it. You can check that it works very, very quickly. And now you go back to solving the problem without having lost all the mental state. And I feel like this is one of the things that's been very useful for me.

Swyx [00:21:34]: And in terms of this concept of expert users versus non-expert users, floors versus ceilings, you had some strong opinion here that like, basically it actually is more beneficial for non-experts.

Nicholas [00:21:46]: Yeah, I don't know. I think it could go either way. Let me give you the argument for both of these. Yes. So I can only speak on the expert user behalf because I've been doing computers for a long time. And so yeah, the cases where it's useful for me are exactly these cases where I can check the output. I know, and anything the model could do, I could have done. I could have done better. I can check every single thing that the model is doing and make sure it's correct in every way. And so I can only speak and say, definitely it's been useful for me. But I also see a world in which this could be very useful for the kinds of people who do not have this knowledge, with caveats, because I'm not one of these people. I don't have this direct experience. But one of these big ways that I can see this is for things that you can check fairly easily, someone who could never have asked or have written a program themselves to do a certain task could just ask for the program that does the thing. And you know, some of the times it won't get it right. But some of the times it will, and they'll be able to have the thing in front of them that they just couldn't have done before. And we see a lot of people trying to do applications for this, like integrating language models into spreadsheets. Spreadsheets run the world. And there are some people who know how to do all the complicated spreadsheet equations and various things, and other people who don't, who just use the spreadsheet program but just manually do all of the things one by one by one by one. And this is a case where you could have a model that could try and give you a solution. And as long as the person is rigorous in testing that the solution does actually the correct thing, and this is the part that I'm worried about most, you know, I think depending on these systems in ways that we shouldn't, like this is what my research says, my research says is entirely on this, like, you probably shouldn't trust these models to do the things in adversarial situations, like, I understand this very deeply. And so I think that it's possible for people who don't have this knowledge to make use of these tools in ways, but I'm worried that it might end up in a world where people just blindly trust them, deploy them in situations that they probably shouldn't, and then someone like me gets to come along and just break everything because everything is terrible. And so I am very, very worried about that being the case, but I think if done carefully it is possible that these could be very useful.

Swyx [00:23:54]: Yeah, there is some research out there that shows that when people use LLMs to generate code, they do generate less secure code.

Nicholas [00:24:02]: Yeah, Dan Bonet has a nice paper on this. There are a bunch of papers that touch on exactly this.

Swyx [00:24:07]: My slight issue is, you know, is there an agenda here?

Nicholas [00:24:10]: I mean, okay, yeah, Dan Bonet, at least the one they have, like, I fully trust everything that sort of.

Swyx [00:24:15]: Sorry, I don't know who Dan is.

Swyx [00:24:17]: He's a professor at Stanford. Yeah, he and some students have some things on this. Yeah, there's a number. I agree that a lot of the stuff feels like people have an agenda behind it. There are some that don't, and I trust them to have done the right thing. I also think, even on this though, we have to be careful because the argument, whenever someone says x is true about language models, you should always append the suffix for current models because I'll be the first to admit I was one of the people who was very much on the opinion that these language models are fun toys and are going to have absolutely no practical utility. If you had asked me this, let's say, in 2020, I still would have said the same thing. After I had seen GPT-2, I had written a couple of papers studying GPT-2 very carefully. I still would have told you these things are toys. And when I first read the RLHF paper and the instruction tuning paper, I was like, nope, this is this thing that these weird AI people are doing. They're trying to make some analogies to people that makes no sense. It's just like, I don't even care to read it. I saw what it was about and just didn't even look at it. I was obviously wrong. These things can be useful. And I feel like a lot of people had the same mentality that I did and decided not to change their mind. And I feel like this is the thing that I want people to be careful about. I want them to at least know what is true about the world so that they can then see that maybe they should reconsider some of the opinions that they had from four or five years ago that may just not be true about today's models.

Swyx [00:25:47]: Specifically because you brought up spreadsheets, I want to share my personal experience because I think Google has done a really good job that people don't know about, which is if you use Google Sheets, Gemini is integrated inside of Google Sheets and it helps you write formulas. Great.

Nicholas [00:26:00]: That's news to me.

Swyx [00:26:01]: Right? They don't maybe do a good job. Unless you watch Google I.O., there was no other opportunity to learn that Gemini is now in your Google Sheets. And so I just don't write formulas manually anymore. It just prompts Gemini to do it for me. And it does it.

Nicholas [00:26:15]: One of the problems that these machine learning models have is a discoverability problem. I think this will be figured out. I mean, it's the same problem that you have with any assistant. You're given a blank box and you're like, what do I do with it? I think this is great. More of these things, it would be good for them to exist. I want them to exist in ways that we can actually make sure that they're done correctly. I don't want to just have them be pushed into more and more things just blindly. I feel like lots of people, there are far too many X plus AI, where X is like arbitrary thing in the world that has nothing to do with it and could not be benefited at all. And they're just doing it because they want to use the word. And I don't want that to happen.

Swyx [00:26:58]: You don't want an AI fridge?

Nicholas [00:27:00]: No. Yes. I do not want my fridge on the internet.

Swyx [00:27:03]: I do not want... Okay.

Nicholas [00:27:05]: Anyway, let's not go down that rabbit hole. I understand why some of that happens, because people want to sell things or whatever. But I feel like a lot of people see that and then they write off everything as a result of it. And I just want to say, there are allowed to be people who are trying to do things that don't make any sense. Just ignore them. Do the things that make sense.

Alessio [00:27:22]: Another chunk of use cases was learning. So both explaining code, being an API reference, all of these different things. Any suggestions on how to go at it? I feel like one thing is generate code and then explain to me. One way is just tell me about this technology. Another thing is like, hey, I read this online, kind of help me understand it. Any best practices on getting the most out of it?

Swyx [00:27:47]: Yeah.

Nicholas [00:27:47]: I don't know if I have best practices. I have how I use them.

Swyx [00:27:51]: Yeah.

Nicholas [00:27:51]: I find it very useful for cases where I understand the underlying ideas, but I have never used

Swyx [00:27:59]: them in this way before.

Nicholas [00:28:00]: I know what I'm looking for, but I just don't know how to get there. And so yeah, as an API reference is a great example. The tool everyone always picks on is like FFmpeg. No one in the world knows the command line arguments to do what they want. They're like, make the thing faster. I want lower bitrate, like dash V. Once you tell me what the answer is, I can check. This is one of these things where it's great for these kinds of things. Or in other cases, things where I don't really care that the answer is 100% correct. So for example, I do a lot of security work. Most of security work is reading some code you've never seen before and finding out which pieces of the code are actually important. Because, you know, most of the program isn't actually do anything to do with security. It has, you know, the display piece or the other piece or whatever. And like, you just, you would only ignore all of that. So one very fun use of models is to like, just have it describe all the functions and just skim it and be like, wait, which ones look like approximately the right things to look at? Because otherwise, what are you going to do? You're going to have to read them all manually. And when you're reading them manually, you're going to skim the function anyway, and not just figure out what's going on perfectly. Like you already know that when you're going to read these things, what you're going to try and do is figure out roughly what's going on. Then you'll delve into the details. This is a great way of just doing that, but faster, because it will abstract most of what

Swyx [00:29:21]: is right.

Nicholas [00:29:21]: It's going to be wrong some of the time. I don't care.

Swyx [00:29:23]: I would have been wrong too.

Nicholas [00:29:24]: And as long as you treat it with this way, I think it's great. And so like one of the particular use cases I have in the thing is decompiling binaries, where oftentimes people will release a binary. They won't give you the source code. And you want to figure out how to attack it. And so one thing you could do is you could try and run some kind of decompiler. It turns out for the thing that I wanted, none existed. And so I spent too many hours doing it by hand. Before I first thought, why am I doing this? I should just check if the model could do it for me. And it turns out that it can. And it can turn the compiled source code, which is impossible for any human to understand, into the Python code that is entirely reasonable to understand. And it doesn't run. It has a bunch of problems. But it's so much nicer that it's immediately a win for me. I can just figure out approximately where I should be looking, and then spend all of my time doing that by hand. And again, you get a big win there.

Swyx [00:30:12]: So I fully agree with all those use cases, especially for you as a security researcher and having to dive into multiple things. I imagine that's super helpful. I do think we want to move to your other blog post. But you ended your post with a little bit of a teaser about your next post and your speculations. What are you thinking about?

Nicholas [00:30:34]: So I want to write something. And I will do that at some point when I have time, maybe after I'm done writing my current papers for ICLR or something, where I want to talk about some thoughts I have for where language models are going in the near-term future. The reason why I want to talk about this is because, again, I feel like the discussion tends to be people who are either very much AGI by 2027, or

Swyx [00:30:55]: always five years away, or are going to make statements of the form,

Nicholas [00:31:00]: you know, LLMs are the wrong path, and we should be abandoning this, and we should be doing something else instead. And again, I feel like people tend to look at this and see these two polarizing options and go, well, those obviously are both very far extremes. Like, how do I actually, like, what's a more nuanced take here? And so I have some opinions about this that I want to put down, just saying, you know, I have wide margins of error. I think you should too. If you would say there's a 0% chance that something, you know, the models will get very, very good in the next five years, you're probably wrong. If you're going to say there's a 100% chance that in the next five years, then you're probably wrong. And like, to be fair, most of the people, if you read behind the headlines, actually say something like this. But it's very hard to get clicks on the internet of like, some things may be good in the future. Like, everyone wants like, you know, a very, like, nothing is going to be good. This is entirely wrong. It's going to be amazing. You know, like, they want to see this. I want people who have negative reactions to these kinds of extreme views to be able to at least say, like, to tell them, there is something real here. It may not solve all of our problems, but it's probably going to get better. I don't know by how much. And that's basically what I want to say. And then at some point, I'll talk about the safety and security things as a result of this. Because the way in which security intersects with these things depends a lot in exactly how people use these tools. You know, if it turns out to be the case that these models get to be truly amazing and can solve, you know, tasks completely autonomously, that's a very different security world to be living in than if there's always a human in the loop. And the types of security questions I would want to ask would be very different. And so I think, you know, in some very large part, understanding what the future will look like a couple of years ahead of time is helpful for figuring out which problems, as a security person, I want to solve now. You mentioned getting clicks on the internet,

Alessio [00:32:50]: but you don't even have, like, an ex-account or anything. How do you get people to read your stuff? What's your distribution strategy? Because this post was popping up everywhere. And then people on Twitter were like, Nicholas Garlini wrote this. Like, what's his handle? It's like, he doesn't have it. It's like, how did you find it? What's the story?

Nicholas [00:33:07]: So I have an RSS feed and an email list. And that's it. I don't like most social media things. On principle, I feel like they have some harms. As a person, I have a problem when people say things that are wrong on the internet. And I would get nothing done if I would have a Twitter. I would spend all of my time correcting people and getting into fights. And so I feel like it is just useful for me for this not to be an option. I tend to just post things online. Yeah, it's a very good question. I don't know how people find it. I feel like for some things that I write, other people think it resonates with them. And then they put it on Twitter. And...

Swyx [00:33:43]: Hacker News as well.

Nicholas [00:33:44]: Sure, yeah. I am... Because my day job is doing research, I get no value for having this be picked up. There's no whatever. I don't need to be someone who has to have this other thing to give talks. And so I feel like I can just say what I want to say. And if people find it useful, then they'll share it widely. You know, this one went pretty wide. I wrote a thing, whatever, sometime late last year, about how to recover data off of an Apple profile drive from 1980. This probably got, I think, like 1000x less views than this. But I don't care. Like, that's not why I'm doing this. Like, this is the benefit of having a thing that I actually care about, which is my research. I would care much more if that didn't get seen. This is like a thing that I write because I have some thoughts that I just want to put down.

Swyx [00:34:32]: Yeah. I think it's the long form thoughtfulness and authenticity that is sadly lacking sometimes in modern discourse that makes it attractive. And I think now you have a little bit of a brand of you are an independent thinker, writer, person, that people are tuned in to pay attention to whatever is next coming.

Nicholas [00:34:52]: Yeah, I mean, this kind of worries me a little bit. I don't like whenever I have a popular thing that like, and then I write another thing, which is like entirely unrelated. Like, I don't, I don't... You should actually just throw people off right now.

Swyx [00:35:01]: Exactly.

Nicholas [00:35:02]: I'm trying to figure out, like, I need to put something else online. So, like, the last two or three things I've done in a row have been, like, actually, like, things that people should care about.

Swyx [00:35:10]: Yes. So, I have a couple of things.

Nicholas [00:35:11]: I'm trying to figure out which one do I put online to just, like, cull the list of people who have subscribed to my email.

Swyx [00:35:16]: And so, like, tell them, like,

Nicholas [00:35:16]: no, like, what you're here for is not informed, well-thought-through takes. Like, what you're here for is whatever I want to talk about. And if you're not up for that, then, like, you know, go away. Like, this is not what I want out of my personal website.

Swyx [00:35:27]: So, like, here's, like, top 10 enemies or something.

Alessio [00:35:30]: What's the next project you're going to work on that is completely unrelated to research LLMs? Or what games do you want to port into the browser next?

Swyx [00:35:39]: Okay. Yeah.

Nicholas [00:35:39]: So, maybe.

Swyx [00:35:41]: Okay.

Nicholas [00:35:41]: Here's a fun question. How much data do you think you can put on a single piece of paper?

Swyx [00:35:47]: I mean, you can think about bits and atoms. Yeah.

Nicholas [00:35:49]: No, like, normal printer. Like, I gave you an office printer. How much data can you put on a piece of paper?

Alessio [00:35:54]: Can you re-decode it? So, like, you know, base 64A or whatever. Yeah, whatever you want.

Nicholas [00:35:59]: Like, you get normal off-the-shelf printer, off-the-shelf scanner. How much data?

Swyx [00:36:03]: I'll just throw out there. Like, 10 megabytes. That's enormous. I know.

Nicholas [00:36:07]: Yeah, that's a lot.

Swyx [00:36:10]: Really small fonts. That's my question.

Nicholas [00:36:12]: So, I have a thing. It does about a megabyte.

Swyx [00:36:14]: Yeah, okay.

Nicholas [00:36:14]: There you go. I was off by an order of magnitude.

Swyx [00:36:16]: Yeah, okay.

Nicholas [00:36:16]: So, in particular, it's about 1.44 megabytes. A floppy disk.

Swyx [00:36:21]: Yeah, exactly.

Nicholas [00:36:21]: So, this is supposed to be the title at some point. It's a floppy disk.

Swyx [00:36:24]: A paper is a floppy disk. Yeah.

Nicholas [00:36:25]: So, this is a little hard because, you know. So, you can do the math and you get 8.5 by 11. You can print at 300 by 300 DPI. And this gives you 2 megabytes. And so, every single pixel, you need to be able to recover up to like 90 plus percent. Like, 95 percent. Like, 99 point something percent accuracy. In order to be able to actually decode this off the paper. This is one of the things that I'm considering. I need to get a couple more things working for this. Where, you know, again, I'm running into some random problems. But this is probably, this will be one thing that I'm going to talk about. There's this contest called the International Obfuscated C-Code Contest, which is amazing. People try and write the most obfuscated C code that they can. Which is great. And I have a submission for that whenever they open up the next one for it. And I'll write about that submission. I have a very fun gate level emulation of an old CPU that runs like fully precisely. And it's a fun kind of thing. Yeah.

Swyx [00:37:20]: Interesting. Your comment about the piece of paper reminds me of when I was in college. And you would have like one cheat sheet that you could write. So, you have a formula, a theoretical limit for bits per inch. And, you know, that's how much I would squeeze in really, really small. Yeah, definitely.

Nicholas [00:37:36]: Okay.

Swyx [00:37:37]: We are also going to talk about your benchmarking. Because you released your own benchmark that got some attention, thanks to some friends on the internet. What's the story behind your own benchmark? Do you not trust the open source benchmarks? What's going on there?

Nicholas [00:37:51]: Okay. Benchmarks tell you how well the model solves the task the benchmark is designed to solve. For a long time, models were not useful. And so, the benchmark that you tracked was just something someone came up with, because you need to track something. All of deep learning exists because people tried to make models classify digits and classify images into a thousand classes. There is no one in the world who cares specifically about the problem of distinguishing between 300 breeds of dog for an image that's 224 or 224 pixels. And yet, like, this is what drove a lot of progress. And people did this not because they cared about this problem, because they wanted to just measure progress in some way. And a lot of benchmarks are of this flavor. You want to construct a task that is hard, and we will measure progress on this benchmark, not because we care about the problem per se, but because we know that progress on this is in some way correlated with making better models. And this is fine when you don't want to actually use the models that you have. But when you want to actually make use of them, it's important to find benchmarks that track with whether or not they're useful to you. And the thing that I was finding is that there would be model after model after model that was being released that would find some benchmark that they could claim state-of-the-art on and then say, therefore, ours is the best. And that wouldn't be helpful to me to know whether or not I should then switch to it. So the argument that I tried to lay out in this post is that more people should make benchmarks that are tailored to them. And so what I did is I wrote a domain-specific language that anyone can write for and say, you can take tasks that you have wanted models to solve for you, and you can put them into your benchmark that's the thing that you care about. And then when a new model comes out, you benchmark the model on the things that you care about. And you know that you care about them because you've actually asked for those answers before. And if the model scores well, then you know that for the kinds of things that you have asked models for in the past, it can solve these things well for you. This has been useful for me because when another model comes out, I can run it. I can see, does this solve the kinds of things that I care about? And sometimes the answer is yes, and sometimes the answer is no. And then I can decide whether or not I want to use that model or not. I don't want to say that existing benchmarks are not useful. They're very good at measuring the thing that they're designed to measure. But in many cases, what that's designed to measure is not actually the thing that I want to use it for. And I expect that the way that I want to use it is different the way that you want to use it. And I would just like more people to have these things out there in the world. And the final reason for this is, it is very easy. If you want to make a model good at some benchmark, to make it good at that benchmark, you can find the distribution of data that you need and train the model to be good on the distribution of data. And then you have your model that can solve this benchmark well. And by having a benchmark that is not very popular, you can be relatively certain that no one has tried to optimize their model for your benchmark.

Swyx [00:40:40]: And I would like this to be-

Nicholas [00:40:40]: So publishing your benchmark is a little bit-

Swyx [00:40:43]: Okay, sure.

Nicholas [00:40:43]: Contextualized. So my hope in doing this was not that people would use mine as theirs. My hope in doing this was that- You should make yours. Yes, you should make your benchmark. And if, for example, there were even a very small fraction of people, 0.1% of people who made a benchmark that was useful for them, this would still be hundreds of new benchmarks that- not want to make one myself, but I might want to- I might know the kinds of work that I do is a little bit like this person, a little bit like that person. I'll go check how it is on their benchmarks. And I'll see, roughly, I'll get a good sense of what's going on. Because the alternative is people just do this vibes-based evaluation thing, where you interact with the model five times, and you see if it worked on the kinds of things that you just like your toy questions. But five questions is a very low bit output from whether or not it works for this thing. And if you could just automate running it 100 questions for you, it's a much better evaluation. So that's why I did this.

Swyx [00:41:37]: Yeah, I like the idea of going through your chat history and actually pulling out real-life examples. I regret to say that I don't think my chat history is used as much these days, because I'm using Cursor, the native AI IDE. So your examples are all coding related. And the immediate question is, now that you've written the How I Use AI post, which is a little bit broader, are you able to translate all these things to evals? Are some things unevaluable?

Nicholas [00:42:03]: Right. A number of things that I do are harder to evaluate. So this is the problem with a benchmark, is you need some way to check whether or not the output was correct. And so all of the kinds of things that I can put into the benchmark are the kinds of things that you can check. You can check more things than you might have thought would be possible if you do a little bit of work on the back end. So for example, all of the code that I have the model write, it runs the code and sees whether the answer is the correct answer. Or in some cases, it runs the code, feeds the output to another language model, and the language model judges was the output correct. And again, is using a language model to judge here perfect? No. But like, what's the alternative? The alternative is to not do it. And what I care about is just, is this thing broadly useful for the kinds of questions that I have? And so as long as the accuracy is better than roughly random, like, I'm okay with this. I've inspected the outputs of these, and like, they're almost always correct. If you ask the model to judge these things in the right way, they're very good at being able to tell this. And so, yeah, I probably think this is a useful thing for people to do.

Alessio [00:43:04]: You complain about prompting and being lazy and how you do not want to tip your model and you do not want to murder a kitten just to get the right answer. How do you see the evolution of like prompt engineering? Even like 18 months ago, maybe, you know, it was kind of like really hot and people wanted to like build companies around it. Today, it's like the models are getting good. Do you think it's going to be less and less relevant going forward? Or what's the minimum valuable prompt? Yeah, I don't know.

Nicholas [00:43:29]: I feel like a big part of making an agent is just like a fancy prompt that like, you know, calls back to the model again. I have no opinion. It seems like maybe it turns out that this is really important. Maybe it turns out that this isn't. I guess the only comment I was making here is just to say, oftentimes when I use a model and I find it's not useful, I talk to people who help make it. The answer they usually give me is like, you're using it wrong. Which like reminds me very much of like that you're holding it wrong from like the iPhone kind of thing, right? Like, you know, like I don't care that I'm holding it wrong. I'm holding it that way. If the thing is not working with me, then like it's not useful for me. Like it may be the case that there exists a way to ask the model such that it gives me the answer that's correct, but that's not the way I'm doing it. If I have to spend so much time thinking about how I want to frame the question, that it would have been faster for me just to get the answer. It didn't save me any time. And so oftentimes, you know, what I do is like, I just dump in whatever current thought that I have in whatever ill-formed way it is. And I expect the answer to be correct. And if the answer is not correct, like in some sense, maybe the model was right to give me the wrong answer. Like I may have asked the wrong question, but I want the right answer still. And so like, I just want to sort of get this as a thing. And maybe the way to fix this is you have some default prompt that always goes into all the models or something, or you do something like clever like this. It would be great if someone had a way to package this up and make a thing I think that's entirely reasonable. Maybe it turns out that as models get better, you don't need to prompt them as much in this way. I just want to use the things that are in front of me.

Alessio [00:44:55]: Do you think that's like a limitation of just how models work? Like, you know, at the end of the day, you're using the prompt to kind of like steer it in the latent space. Like, do you think there's a way to actually not make the prompt really relevant and have the model figure it out? Or like, what's the... I mean, you could fine tune it

Nicholas [00:45:10]: into the model, for example, that like it's supposed to... I mean, it seems like some models have done this, for example, like some recent model, many recent models. If you ask them a question, computing an integral of this thing, they'll say, let's think through this step by step. And then they'll go through the step by step answer. I didn't tell it. Two years ago, I would have had to have prompted it. Think step by step on solving the following thing. Now you ask them the question and the model says, here's how I'm going to do it. I'm going to take the following approach and then like sort of self-prompt itself.

Swyx [00:45:34]: Is this the right way?

Nicholas [00:45:35]: Seems reasonable. Maybe you don't have to do it. I don't know. This is for the people whose job is to make these things better. And yeah, I just want to use these things. Yeah.

Swyx [00:45:43]: For listeners, that would be Orca and Agent Instruct. It's the soda on this stuff. Great. Yeah.

Alessio [00:45:49]: That's a few shot. It's included in the lazy prompting. Like, do you do a few shot prompting? Like, do you collect some examples when you want to put them in? Or...

Nicholas [00:45:57]: I don't because usually when I want the answer, I just want to get the answer. Brutal.

Swyx [00:46:03]: This is hard mode. Yeah, exactly.

Nicholas [00:46:04]: But this is fine.

Swyx [00:46:06]: I want to be clear.

Nicholas [00:46:06]: There's a difference between testing the ultimate capability level of the model and testing the thing that I'm doing with it. What I'm doing is I'm not exercising its full capability level because there are almost certainly better ways to ask the questions and sort of really see how good the model is. And if you're evaluating a model for being state of the art, this is ultimately what I care about. And so I'm entirely fine with people doing fancy prompting to show me what the true capability level could be because it's really useful to know what the ultimate level of the model could be. But I think it's also important just to have available to you how good the model is if you don't do fancy things.

Swyx [00:46:39]: Yeah, I would say that here's a divergence between how models are marketed these days versus how people use it, which is when they test MMLU, they'll do like five shots, 25 shots, 50 shots. And no one's providing 50 examples. I completely agree.

Nicholas [00:46:54]: You know, for these numbers, the problem is everyone wants to get state of the art on the benchmark. And so you find the way that you can ask the model the questions so that you get state of the art on the benchmark. And it's good. It's legitimately good to know. It's good to know the model can do this thing if only you try hard enough. Because it means that if I have some task that I want to be solved, I know what the capability level is. And I could get there if I was willing to work hard enough. And the question then is, should I work harder and figure out how to ask the model the question? Or do I just do the thing myself? And for me, I have programmed for many, many, many years. It's often just faster for me just to do the thing than to figure out the incantation to ask the model. But I can imagine someone who has never programmed before might be fine writing five paragraphs in English describing exactly the thing that they want and have the model build it for them if the alternative is not. But again, this goes to all these questions of how are they going to validate? Should they be trusting the output? These kinds of things.

Swyx [00:47:49]: One problem with your eval paradigm and most eval paradigms, I'm not picking on you, is that we're actually training these things for chat, for interactive back and forth. And you actually obviously reveal much more information in the same way that asking 20 questions reveals more information in sort of a tree search branching sort of way. Then this is also by the way the problem with LMSYS arena, right? Where the vast majority of prompts are single question, single answer, eval, done. But actually the way that we use chat things, in the way, even in the stuff that you posted in your how I use AI stuff, you have maybe 20 turns of back and forth. How do you eval that?

Nicholas [00:48:25]: Yeah. Okay. Very good question. This is the thing that I think many people should be doing more of. I would like more multi-turn evals. I might be writing a paper on this at some point if I get around to it. A couple of the evals in the benchmark thing I have are already multi-turn. I mentioned 20 questions. I have a 20 question eval there just for fun. But I have a couple others that are like, I just tell the model, here's my get thing, figure out how to cherry pick off this other branch and move it over there. And so what I do is I just, I basically build a tiny little agency thing. I just ask the model how I do it. I run the thing on Linux. This is what I want a Docker for. I spin up a Docker container. I run whatever the model told me the output to do is. I feed the output back into the model. I repeat this many rounds. And then I check at the very end, does the git commit history show that it is correctly cherry picked in this way? And so I have a couple of these. I agree that I have many fewer than what I actually use them for. And I think the reason why is just that it's hard to evaluate this. Like it's more challenging to do this kind of evaluation. I would like to see a lot more of these kinds of things to exist so that people could come up with these evals that more closely measure what they're actually doing.

Alessio [00:49:34]: Just before we wrap on this, there was one example about a UU encode. And you mentioned how nobody uses this thing anymore. When you run into something like this and you know that no more data is going to get produced on this thing, do you figure out how to fine tune the model if it really mattered to you? Put together some examples, or would you just say, hey, the model just doesn't do it, whatever, move on? Yeah.

Nicholas [00:49:59]: This was an example of a thing where I was looking at some data that was a file that was produced in like the mid-90s, early 90s or something, when UU encoding was actually a thing that people would do. And I wanted the model to be able to automatically determine the type of file to decompress

Swyx [00:50:18]: in something.

Nicholas [00:50:18]: And it was doing it correctly for like 99% of cases. And I found a few UU encoded things where it couldn't figure out this was UU encoding, not base 64. OK. This is not important. I just was curious if it could do it. And so I put this as a thing. I think probably this is a thing that if you really cared about this task being solved well, you would train a model for. But again, this is one of these kinds of tasks that this was some dumb project that no one's going to care about. I just wanted to see if I could do it. If the model was good enough that it gets me 90% of the way there, good, like done. I figured it out. Like I can sort of have fun for a couple hours and then move on. And that's all I want. I was not like, if I ever had to train a thing for this, I was not going to do it. And so it did well enough for me that I could move on.

Swyx [00:50:57]: It does give me an idea for adversarial examples inside of a benchmark that are basically canaries for overtraining on the benchmark. Typically, right now, benchmarks have canary strings. If you ask it to repeat back the string and it does, then it's trained on it. But, you know, it's easy to filter out those things. But the benchmarks, you put in some things, some questions that are intentionally wrong. And if it gives you the intentionally wrong answer, then you know it's. Yeah, there are actually

Nicholas [00:51:20]: a couple of papers that don't do exactly this, but that are doing dataset inference. This is a field of work called membership inference. This is one of the things I do research on that tries to figure out, did you train on this example or not? Yeah, there's a field called like dataset inference. Did you train on this dataset or not? And there's like a specific subfield of this that looks specifically at, like, did you train on your test set or you train on your training set? And they basically look at exactly this.

Swyx [00:51:47]: Like, for example,

Nicholas [00:51:47]: one, there's this paper by Tatsu out of Stanford where they check if the order that the specific questions happen to be in matters. And if the answer is yes, then you probably trained on it

Swyx [00:51:59]: because the order of the questions

Nicholas [00:51:59]: is arbitrary and shouldn't matter.

Swyx [00:52:01]: There are a number of papers

Nicholas [00:52:01]: that follow up on this and do some similar things. I think this is a great way of doing this now.

Swyx [00:52:06]: It might be even better

Nicholas [00:52:06]: if some people included some canary questions in their benchmarks. But even if they don't, you can already sort of start getting at this now.

Swyx [00:52:13]: Yeah.

Nicholas [00:52:13]: Yeah, let's go into

Alessio [00:52:14]: some of your research. I always love security work. I was at Black Hat last week. I had to miss DEF CON. Let's start from the LAION 400M data poisoning. So basically the idea is, you know, LAION 400M is one of the biggest image datasets for image models. And a lot of the image gets pulled from live domains. So it's not all, yeah.

Nicholas [00:52:38]: Every image gets pulled from a live domain, yes. So it's not all stored.

Alessio [00:52:40]: And a bunch of the domains expired. So then you went on and you bought the domains and you got to put literally anything on it. And you got to poison every single model that was training on the dataset.

Nicholas [00:52:51]: Yep, it was a lot of fun.

Alessio [00:52:52]: Maybe just talk about some of the things that people don't think about when it comes to like the datasets.

Swyx [00:52:57]: We talked before

Alessio [00:52:57]: about low background tokens. So before maybe 2020, you can imagine most things you get from the internet a human wrote or like, you know, after 2021, you can imagine most things written are like somewhat AI generated. Any other fun stories? So like maybe give more of the LAION background. How did you figure out? Do you just like check all the domains in it and see what expire? Why do they not do it?

Nicholas [00:53:20]: Yeah, so why did the paper happen? The adversarial machine learning literature for a very long time was focused on what could I do in the worst case? Because no one was using these tools and no one's using them. It doesn't make sense to really ask, like, how do I attack this actual system? And so people would write papers or me included. I have lots of these that like assume an adversary could do the following and then list 10 unrealistic things. Then very bad harm could happen. And in some sense, like, you have to do this. If you have no real system in front of you,

Swyx [00:53:53]: like what are you going to do

Nicholas [00:53:53]: as a security researcher? One thing you could do is just nothing. You could just wait. Like this is a bad option because eventually someone's going to use these things and you would rather have a head start. So how do you get a head start? You make a guess. You say maybe future systems will do X. And then you write a paper that sort of looks at this. And then maybe it turns out that some of these are directionally correct,

Swyx [00:54:10]: some are not.

Nicholas [00:54:10]: And so, OK, so this has happened for quite some long time.

Swyx [00:54:13]: And then machine learning

Nicholas [00:54:13]: started to work. And the thing that bothered me is it seems like the adversarial machine learning community didn't then try and adapt and try and actually start studying real problems. So we very deliberately started looking, like, what are the problems that actually arise in real systems as they exist now? Like, what is the kind of paper that I could imagine writing that would be at black hat? That like a real security person would want to see, not because here's a fun thing

Swyx [00:54:39]: that you can make

Nicholas [00:54:39]: this machine learning model do, but because legitimately the easiest way to make the bad thing happen is to go after the machine learning model. So the way we decided to do this is like sort of a very, like, every time you see some new thing, you say, well, here are the bad things

Swyx [00:54:52]: that could happen.

Nicholas [00:54:52]: You know, I could try and do an evasion attack at test time. I could try and do a poisoning attack that made the model train on bad data. I could try and steal the model. I could try and steal the data. You know, the list of, like, 10 bad things you could try and make happen. And every time you see some new thing, you ask, OK, here's my list of 10 problems. Which of them are most important and relevant to this? And you just do this for every single one in the list. And, you know, most of the time the answer is nothing. And you just, then you get nothing out of it.

Swyx [00:55:14]: But, like, on occasion,

Nicholas [00:55:14]: you sort of figure out, OK, here's this new data set. It is being distributed in such a way that anyone in the world can buy domains that let them inject arbitrary images into the data set. There's the attack.

Swyx [00:55:25]: And, like, you know,

Nicholas [00:55:25]: this is, I think, the way that we came to doing this from this motivation of let's try and look at some real security stuff.

Alessio [00:55:32]: I think when people think of AI security, they either think of jailbreaks, you know, which is kind of, like,

Swyx [00:55:38]: very limited,

Alessio [00:55:38]: or they kind of go the broader, oh, is AI going to kill us all? I think you've done a lot of awesome papers on, like, the in-between. So one thing is the jailbreak. Like, you've also had a paper on stealing part of a production LLM. You extracted, like, the Babbage and Ada, like, dimension layers from, like, the OpenAI API. So there's even things that, like, as a user, you're worried about the jailbreaks. But, like, as a model provider, you're actually worried about...

Nicholas [00:56:04]: Yeah, exactly. This paper was, again, with the exact same motivation. So as some history, there's this field of research called model stealing. What it's interested in is you have your model that you have trained.

Nicholas [00:56:13]: It was very expensive. I want to query your model and steal a copy of the model so that I have your model without paying for the training costs. And we have some very nice work that shows that this is possible. Like, I can steal your exact model as long as your model has, let's say, a couple thousand neurons evaluated in Float64 with value-only activation, fully connected networks. I see the full logic outputs, and I can feed in arbitrary floating point 64 numbers and inputs.

Swyx [00:56:39]: Each of these assumptions

Nicholas [00:56:39]: I've just said is false in practice. Like, none of these things are things you can really do. I think it's fun research. I mean, there's a reason the paper is at Crypto. The reason it's at Crypto and not at an actual security conference because it's a very theoretical kind of thing. And I think it's an important direction for people to think about because maybe you can extend these to make it be possible. But I also think it's worth thinking about the problem from the other direction. Let's look at what the real models we have in front of us are. Let's see how we can make those models be vulnerable to stealing attacks. And then we can push from the other direction. Let's take the most practical attacks and make them more powerful. And that's, again,

Swyx [00:57:11]: what we're trying to do here.

Nicholas [00:57:12]: We looked at what APIs do actually people expose in the biggest models. How can we use some of that to do as much stealing as we possibly can? And for this, we ran the attack that let us stole several of OpenAI's models with their permission. It's a fun email to send. Hello, Mr. Lawyer. Sorry, Google. First, I have to email them. Hello, Google Lawyer. I would like to steal OpenAI's models. And they say, under no circumstances. And you say, OK, what if they agree to it? And they're like, if they agree to it, fine. And then you say, I know some people there. I email them, like, can I steal your model? And they're like, as long as you delete it afterwards, OK. And I'm like, can you get your general counsel to put that in writing? And they're like, sure. So we had all of the lawyers talk to each other. Everyone agreed that it's important to do this. You don't want to actually cause harm when doing security work. And so we got all of the agreements out of the way. And then we went and ran the attack. And yeah, it worked great. And then we can write the paper. Before we put the paper online, we notified everyone who was vulnerable to this attack. Some Google models were vulnerable. Some OpenAI models were vulnerable. There were one or two other people who were vulnerable that we didn't name in the paper. We notified them all, gave them 90 days to fix it, which is like a standard disclosure period in security. That was all patched. OpenAI got rid of some APIs. And then we put the paper online.

Swyx [00:58:32]: The fix was just don't show logits.